Case Studies and Career Highlights

Here I hold a magnifying glass up to a few career-defining moments.

These are not all technical but give an insight into some hopefully interesting points of my career.

Symantec - Project Athena (2018).

Where it all ended in Champagne!

Without a doubt my favourite career moment and a huge success which rippled all over Symantec worldwide. The champagne (pictured) was well and truly deserved for this one!

Project Athena was a huge project undertaken by Symantec to integrate the recently purchased Blue Coat Systems (for whom I originally worked) into their existing infrastructure. I was called on from my position in Business to oversee the IT integration for the move to the new Salesforce instance and ensure that everything functioned as intended, and I flew to Silicon Valley for several weeks to do so.

It is important to understand the company hierarchy here. Although a part of business, my team - Licensing - was very tech heavy and we looked after our own code, keeping it away from external IT departments and very close to us. Licensing was always a part of Business and not IT due to our close ties with what the business required.

The initial requirement was to spec out all the points where our systems reached into the systems run by IT - largely Salesforce, which was our ultimate source of truth. During the actual release I sat with IT throughout the testing cycles, the release itself and, of course, the smoke testing, to ensure everything ran smoothly and to be on hand should anything not function correctly.

To give an example of the sort of thing we needed to work on: we used to send out 'cold standby' appliances so that, in the event of an issue with a production appliance, the customer could just swap out the old unit for a new one. The cold standby had its own serial number and was not licensable itself, so we implemented a feature where all the active components and subscriptions from the faulty appliance could be transferred to the cold standby and the serial numbers would swap over - the customer could then license their standby and automatically get the feeds they had purchased for the original appliance. This had to happen in our systems but also be mirrored in the Salesforce database, which meant a lot of data swapping. These changes also had to propagate through other databases such as Oracle and various other endpoints so that people such as the all-important customer care teams could deal with any issues.

As Symantec's Salesforce instance worked in a completely different way to that of Blue Coat, we had new code to sync between them - in fact there was new code and new sync processes all the way down and back up to the various endpoints. We had to test this first in the test environment - a complete environment with a duplicate Salesforce, duplicate licensing servers, duplicate subscription download servers, a duplicate customer service portal and so on. It also had to be smoke tested at release time, so at that point I had to sync with the Salesforce team and the subscription download team, who were all either present for the release or otherwise available for checking.

I also had to check the documentation to be given out to customer services so our staff would know how to assist customers in the event of any issues going forward. License swap was one part of a large collection of services that we provided. Our seven servers included a validation server which appliances would call hourly to ensure they were allowed to be running. There were various services for issuing SSL certificates, as all our authentication from appliances used these certificates as part of a wider trust package. Load balancers fronted all our servers and were themselves run by another team, so we had to sync up with that team as well. Absolutely everything had to be tested and double checked.

My team was one of many teams all working together to facilitate the entire project. An entire building was given over to the process with many hundreds of people working together and independently in a huge choreographed operation.

Specifically, I sat with IT throughout the process, which took place over several weeks with one core release weekend at head office. It was hard work but great times were had and, due to how closely we were working, lifetime friends were made who I am still in touch with many years later. We even cracked open a bottle of champagne upon successful completion - yes, that's me opening it!

This is perhaps my proudest achievement due to the high visibility it had and, although I haven't focussed much on technical detail here, my name was suddenly known in every office worldwide. It's an experience which was hard work but is very fondly remembered, despite the ridiculous lack of sleep at times - I was still dealing with emergency response situations throughout!

Symantec transition to Broadcom

When a unique id is no longer unique

One particularly notable thing that changed after Symantec was acquired by Broadcom was that they wanted to sell our services in a very different way. Rather than selling single appliances they wanted to sell bundles of products including appliances and software. Serial numbers had always been unique; however, Broadcom introduced a bundles system where a single serial number could cover any number of appliances and subscriptions, monitored by metrics such as the number of cores across the total number of appliances rather than per appliance. Rather than buying 4 appliances, the end user might purchase 100 cores to be used over an unspecified number of virtual appliances. And you can probably see where this is going already.

What happens when your unique identifier is no longer a unique identifier? How do you deal with databases full of millions of records using this as the unique identifier?

Validation is the area I will focus on here. This previously worked on a token-based authentication system together with SSL certificates, whereby appliances would swap secure tokens with us so we would know they were who they said they were. If we got more hourly calls home than were expected, we'd know an appliance had been duplicated and could shut it off. With bundles, this was no longer the case.

I implemented a new system based on unique appliance identifiers instead and had to work with the appliance software teams to implement this on the appliances before we could write any code. The new validation code would read data sent by the appliances themselves regarding how many cores they had and these could be aggregated over various periods of time. We could then push warning messages to the appliances if they had gone over or were coming close. I implemented thresholds whereby appliances could go over a percentage of what they had purchased allowing them time to track down and turn off older appliances. I had to implement reporting both internally and to the customer for how many cores they had running together with their IP addresses and unique identifiers.
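To make the threshold idea concrete, here is a minimal Python sketch of aggregating reported cores per unique appliance identifier and classifying the result against what was purchased. It is an illustration only, not the production validation code: the names (CheckIn, core_usage_status), the 10% grace allowance and the 90% warning level are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class CheckIn:
    appliance_id: str  # unique appliance identifier sent by the appliance (assumed field)
    cores: int         # number of cores the appliance reports it is running


def core_usage_status(check_ins, purchased_cores, grace_pct=10, warn_pct=90):
    """Return (cores_in_use, status) for one bundle.

    Only the most recent report per appliance counts, so an appliance that
    calls home many times is not counted more than once.
    """
    latest = {}
    for report in check_ins:  # later reports overwrite earlier ones
        latest[report.appliance_id] = report.cores
    used = sum(latest.values())

    hard_limit = purchased_cores * (100 + grace_pct) / 100
    if used > hard_limit:
        status = "over_limit"   # beyond the grace allowance
    elif used > purchased_cores:
        status = "in_grace"     # over, but still within the allowance
    elif used >= purchased_cores * warn_pct / 100:
        status = "warning"      # coming close to the purchased total
    else:
        status = "ok"
    return used, status
```

With 100 cores purchased, two appliances reporting 48 and 40 cores give (88, "ok"); a third appliance reporting 17 cores takes the total to 105, which falls inside the assumed 10% grace band.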

This created a number of issues - all of our internal reporting had to be re-written. SQL queries which were previously fairly simple suddenly became multi-tier stacked sub-queries which I was tasked with writing.

Here is an example of one of those queries (table names and identifying fields changed, of course):

WITH difference_in_seconds AS (
    SELECT DISTINCT appliance_identifier, appliance_name, public_ip_address, event_time,
        TIMESTAMPDIFF(SECOND, event_time, NOW()) AS seconds
    FROM tablename
    WHERE serial_number = '$serial' AND appliance_identifier = '$app_id'
    ORDER BY event_time DESC
),
differences AS (
    SELECT appliance_identifier, appliance_name, public_ip_address, event_time, seconds,
        MOD(seconds, 60) AS seconds_part,
        MOD(seconds, 3600) AS minutes_part,
        MOD(seconds, 3600 * 24) AS hours_part
    FROM difference_in_seconds
)
SELECT appliance_identifier, appliance_name, public_ip_address, event_time,
    CONCAT(
        FLOOR(seconds / 3600 / 24), ' days ',
        LPAD(FLOOR(hours_part / 3600), 2, 0), ':',
        LPAD(FLOOR(minutes_part / 60), 2, 0), ':',
        LPAD(seconds_part, 2, 0), ' ago'
    ) AS last_seen,
    seconds / 60 AS tsd
FROM differences

What this allowed me to do was show appliances in order of how recently they had connected and tailor the display to something logical and easy to read. Before, this had been a simple matter - just select the number of connections we'd seen from a particular serial number. Changing the UID did not just warrant database changes - it changed, and complicated, pretty much everything!
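The arithmetic behind the last_seen column is easier to follow outside SQL. The Python sketch below mirrors the same MOD and FLOOR steps for a single value; the function name is chosen for the example and is not part of the original code.

```python
def last_seen(seconds: int) -> str:
    """Format an age in seconds as 'D days HH:MM:SS ago', mirroring the SQL."""
    seconds_part = seconds % 60          # MOD(seconds, 60)
    minutes_part = seconds % 3600        # MOD(seconds, 3600)
    hours_part = seconds % (3600 * 24)   # MOD(seconds, 3600 * 24)

    days = seconds // (3600 * 24)        # FLOOR(seconds / 3600 / 24)
    hours = hours_part // 3600           # FLOOR(hours_part / 3600)
    minutes = minutes_part // 60         # FLOOR(minutes_part / 60)

    # The :02d format plays the role of LPAD(..., 2, 0)
    return f"{days} days {hours:02d}:{minutes:02d}:{seconds_part:02d} ago"


print(last_seen(93784))  # 1 days 02:03:04 ago
```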

The core of the code where this was used is below. There are a number of things not included with this - str_to_color was an interesting function I wrote which would turn a string of text into an HTML colour in hex format. This was for making reports more readable - each individual appliance was colour coded in the report to make it easy to see the points at which it had connected. Even this took a bit of work - all the colours came out vaguely similar at first, so code was written to make the colours as different as possible for ease of interpretation. Maybe I should have focussed on this part instead. The takeaway, though, is just how complex things got, and how quickly, to the point that we had to do things like this.

sub event_log (){

my $serial = shift;

my $aid = shift;

my $unlimited = shift;

my $db_name = 'my_database';

my $dbh_handler = config::db_connect($db_name);

my @fields=();

# For all fields, do a desc

my $desc_query = "DESC tablename";

my $sth = $dbh_handler->prepare($desc_query);

$sth->execute;

while (my $row = $sth->fetchrow_hashref){

if ($row->{Field} ne "id" && $row->{Field} ne "serial_number"){

push (@fields, $row->{Field});

}

}

# Or for some fields, just list

@fields = qw ( event_time appliance_identifier public_ip_address appliance_name tsd last_seen);

my @print_fields = qw ( event_time appliance_id public_ip appliance_name how_long_ago);

#@print_fields = qw ( event_time appliance_id grace_counter green last_token_matched second_last_matched public_ip appliance_name how_long_ago);

# History table

#my $sql_query = "SELECT * FROM tablename WHERE serial_number = '$serial' ORDER BY id DESC";

my $sql_query = "SELECT event_time, TIMESTAMPDIFF(MINUTE,event_time,NOW()) AS tsd, appliance_identifier, public_ip_address, appliance_name FROM tablename WHERE serial_number = '$serial' ORDER BY id DESC";

$sql_query = "WITH difference_in_seconds AS ( SELECT DISTINCT appliance_identifier, appliance_name, public_ip_address, event_time, TIMESTAMPDIFF(SECOND,event_time,NOW()) AS seconds FROM tablename WHERE serial_number = '$serial' ORDER BY event_time DESC), differences AS ( SELECT appliance_identifier, appliance_name, public_ip_address, event_time, seconds, MOD(seconds, 60) AS seconds_part, MOD(seconds, 3600) AS minutes_part, MOD(seconds, 3600 * 24) AS hours_part FROM difference_in_seconds) SELECT appliance_identifier, appliance_name, public_ip_address, event_time, CONCAT( FLOOR(seconds / 3600 / 24), ' days ', LPAD(FLOOR(hours_part / 3600), 2, 0), ':', LPAD(FLOOR(minutes_part / 60), 2, 0), ':', LPAD(seconds_part, 2, 0), ' ago') AS last_seen, seconds/60 as tsd FROM differences";

if ($aid){

$sql_query = "WITH difference_in_seconds AS ( SELECT DISTINCT appliance_identifier, appliance_name, public_ip_address, event_time, TIMESTAMPDIFF(SECOND,event_time,NOW()) AS seconds FROM tablename WHERE serial_number = '$serial' AND appliance_identifier = '$aid' ORDER BY event_time DESC), differences AS ( SELECT appliance_identifier, appliance_name, public_ip_address, event_time, seconds, MOD(seconds, 60) AS seconds_part, MOD(seconds, 3600) AS minutes_part, MOD(seconds, 3600 * 24) AS hours_part FROM difference_in_seconds) SELECT appliance_identifier, appliance_name, public_ip_address, event_time, CONCAT( FLOOR(seconds / 3600 / 24), ' days ', LPAD(FLOOR(hours_part / 3600), 2, 0), ':', LPAD(FLOOR(minutes_part / 60), 2, 0), ':', LPAD(seconds_part, 2, 0), ' ago') AS last_seen, seconds/60 as tsd FROM differences";

}

if (!$unlimited){

$sql_query .= " LIMIT 1000;";

} else {

$sql_query .= " LIMIT 2000;";

}

$sth = $dbh_handler->prepare($sql_query);

$sth->execute or die "SQL Error: $DBI::errstr\n";

do_print("");

do_print("");

my $counter=0;

foreach my $header(@print_fields){

my $print_header = ucfirst($header);

$print_header =~ s/_/ /g;

do_print("" . $print_header . " ");

$counter++;

}

do_print(" ");

my $last_colour = undef;

my $current_unit="mins";

my $last_unit="mins";

my $hour_counter=1;

my $last_hour_counter=1;

while (my $row = $sth->fetchrow_hashref){

#print Dumper $row;

do_print("");

my $tsd = $row->{'tsd'};

my $unit = "mins";

if ($tsd > 60){

$unit = "hours";

$tsd = $tsd/60;

$current_unit="hours";

$hour_counter=int($tsd);

if ($tsd > 24){

$unit = "days";

$tsd = $tsd/24;

$current_unit="days";

}

$tsd = int($tsd);

}

if ($last_unit ne $current_unit){

do_print(" ");

$last_unit=$current_unit;

}

if ($hour_counter ne $last_hour_counter){

do_print(" ");

$last_hour_counter=$hour_counter;

}

foreach my $field(@fields){

if ($field eq "appliance_name" && $row->{$field} =~ /HASH\(/){

$row->{$field} = " (not set)";

}

if ($field eq "tsd"){

#do_print(" " . $tsd . " " . $unit . " ");

} elsif ($field eq "appliance_identifier"){

my $col = str_to_color::string_to_diverse_colour($row->{$field});

do_print(" ▣ " . $row->{$field} . " ");

} else {

do_print(" " . $row->{$field} . " ");

}

}

do_print(" ");

}

do_print("

");

do_print ("

");

}

...
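The str_to_color helper is not shown above. As an illustration only, one common way to get visibly different colours from similar-looking strings is to hash the string and use the hash to pick a hue, while holding saturation and lightness fixed. The Python sketch below shows that general technique; it is not the original function, and the function name and the 0.5 / 0.65 constants are assumptions.

```python
import colorsys
import hashlib


def string_to_colour(text: str) -> str:
    """Map a string to a '#rrggbb' colour, deterministically.

    Hashing first means near-identical identifiers (differing by one
    character, say) still land on unrelated hues.
    """
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    # Use the first 4 bytes of the hash as a fraction of the colour wheel
    hue = int.from_bytes(digest[:4], "big") / 2**32
    # Fixed lightness and saturation keep every colour readable
    red, green, blue = colorsys.hls_to_rgb(hue, 0.5, 0.65)
    return "#{:02x}{:02x}{:02x}".format(
        round(red * 255), round(green * 255), round(blue * 255)
    )
```

Because the colour depends only on the string, the same appliance identifier gets the same colour on every report.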

Delerium Music - Building an e-commerce site and ERP solution from scratch!

This is where my career started. The company had been selling online via a zipped text file which customers could download before emailing their orders in to us. This went live in 1994, before my time. When I joined in 1997 nobody there knew anything about selling online, and I was the one who originally identified the issue. I wrote a basic shopping cart application in Perl - again using text files - and demonstrated it to the owner, who wanted me to take it further. As a result, we were one of the first music retailers on the net!

Over the next ten years this grew to a fully fledged e-commerce system providing an advanced search system, cross-categorisation of product into over 100 genres of music, pre-order system, wishlist, all written by myself from scratch. Of course we needed easy ways to flag product for special promotion in places such as the front page etc which was all slowly built in.

The postage calculator was extremely powerful and worth a mention. It dealt with taking orders of various different types of product including items in stock, pre-orders, out of stock and due in on X date etc, and would calculate costs for multiple packages based on dates product was due in allowing customers to split orders up how they liked. Many customers were happy to wait for product to come in before an entire order was shipped to minimise postage which could get very expensive with heavy vinyl releases. Others wanted things sent as they came and were happy to pay whatever the shipping charges were. All this was meticulously coded in, with tables of data from shipping providers to find the cheapest options, and edit screens to update these prices, relying on the code to do the math behind the scenes. The complexity of suggesting parcel splits based on when we were likely to hit minimum ordering quantities from specialist suppliers and allowing customers to tweak them was something else! An entire mail program was written as well to let customers know when things were delayed and ask them what they wanted to do.

Over the years this grew into an enormous system. It was integrated with the in-house MS Access database, which I later inherited after the original creator left. This could automatically create order screens from imported text file data, produce printable invoices and manage stock control. Other areas of this database included producing royalty statements for artists on the label. It was an entire ERP system and shopping cart solution in one, managing everything from customer data to automatically ordering product from suppliers when shipping targets were realised. No stone was left unturned!

Somewhat in contrast to what people expect, I built and ran this entire system on my own - even down to creating the logo in Photoshop as we didn't have a designer. Other than back and forth with management for the all-important discussions, all the code was mine and came from nothing but a blank text document, an idea, and a desire to build something worthwhile. It gave me my career. It was a huge success, and Record Collector magazine thought the web site was so good that they reviewed it in a 4 page article! We were regularly featured in the press - the Guardian were also big fans. BBC Radio 6 loved it and regularly promoted us. For its time, it was a very cutting edge web site. The categorisation of music product into defined niche genres was also my idea - one that no other company had done at the time, but everybody soon copied!

Also in contrast to what people expect, I was able to keep this going as a contract and work in other places. We were a major e-commerce site, directly competing with the likes of Amazon - who reached out to us on many occasions trying to get us to list our product on their site (to which we said NO every time!). For such a site to be created by one person who wasn't even full time, well, this was a sign of the times. It was easy back then. Nowadays, people just don't believe what I did. After this I'd work for companies producing far less technically demanding work with a lot more people - front end team, back end team, designer, etc. It was a feat for which I retain a great sense of achievement. I have recently discovered that it has a section on the Delerium Records Wikipedia page here

I was not just a software engineer for this company; I was a think tank, a part of the fabric of the company, able to put my thoughts and ideas in and see them through to realization. It was also a labour of love. For ten years, it was the greatest job in the world. For a short while afterwards the manager and I packaged up the software as a platform and licensed it to other companies under the Paragon Digital brand.

GoHenry - Hiring a team and moving a banking application in-house

In 2013 I became technical team leader for GoHenry, tasked with hiring a team and pulling all the web development in-house from a third party software house.

This was my first time hiring people directly. Initially the front end was handed to me to work on, allowing me to become familiar with how all the tech worked. The third party was on hand to answer questions as I wished, and I went down to their offices for a couple of days while they explained the architecture to me.

Once I had gained a good understanding of the systems, the next thing to do was write out some technical questions covering the sort of things we needed, and start advertising. My no.1 technical question - the first one I asked - had the greatest impact. I thought this was quite a simple question - 'How do you validate an email address?'. I swiftly became amazed at the number of people who could not provide a satisfactory answer to this, or even take a stab at it. I hoped that the terms 'regular expression' or 'pattern matching' would have figured in a response. Even some kind of vague stab such as 'it needs to have an @ sign in it' would have been good. Instead, I was quite taken aback by the puzzled looks I received.

Thankfully, in time we did find the right people, but only after a lot of searching! I found an expert PHP Zend1.1 guy who was hired immediately and a great front end programmer too, and with myself spanning the two everything was pulled in house over the next few months. I saw the company go from a few hundred customers up to 100,000 over the course of the next year. Since then they have gone from strength to strength and it's been a pleasure to watch them grow. Unfortunately the commute times (up to 2.5 hours each way every day) became unfeasible for me long term and would have meant relocation for myself and my pack of dogs into London, which I was not able to do at the time, but this was a fantastic and memorable experience.

Interesting quirks: Using JavaScript to run a banking front end provided a unique set of challenges. As I already knew, 0.1+0.2 does NOT equal 0.3 in JavaScript! I wrote a custom library of functions and made sure that everything was passed through these functions for validation. One interesting bug came from a drop down list of months where 08 got interpreted as octal, making us wonder why nobody who signed up was born in August! A move to TypeScript happened shortly after.
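The floating point problem is not specific to JavaScript; any language using binary floating point shows it. As a hedged illustration of the usual workaround (not the custom library described above), the Python sketch below keeps money as whole pence and only converts at the edges. The function names are assumptions made for the example.

```python
from decimal import Decimal, ROUND_HALF_UP


def to_pence(amount: str) -> int:
    """Parse a decimal string such as '0.10' into a whole number of pence."""
    pence = (Decimal(amount) * 100).quantize(Decimal("1"), rounding=ROUND_HALF_UP)
    return int(pence)


def format_pounds(pence: int) -> str:
    """Format whole pence as pounds with two decimal places."""
    sign = "-" if pence < 0 else ""
    pounds, remainder = divmod(abs(pence), 100)
    return f"{sign}{pounds}.{remainder:02d}"


# Binary floating point cannot represent 0.1 or 0.2 exactly
print(0.1 + 0.2 == 0.3)                                        # False
# Integer pence add up exactly
print(to_pence("0.10") + to_pence("0.20") == to_pence("0.30"))  # True
```

Amounts are passed in as strings so that no float is ever created on the way in.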

Gonzo Multimedia - A 'fuzzy' search function

Gonzo Multimedia - originally on gonzomultimedia.co.uk - was a web site I built when working for Paragon Digital. They wanted their search functionality to be vastly improved so user input errors could be taken into account.

After some research I implemented something far more complicated than I'd done before using the Jaro-Winkler function. This is a string metric that measures the similarity between two strings, returning a score between 0 (no similarity) and 1 (exact match). As this needed to run as part of a query to a database this function was implemented as a stored procedure in MySQL which could be called from the PHP back end. Essentially it provided a 'match distance' so fuzzy matches could be taken into account and hopefully deal with spelling errors in user input, which it did.

Using some extra PHP code I was able to provide exact results, followed by close results. Even this wasn't so simple. If there were no results with the exact string I would default to the fuzzy matches straight away.
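For readers who have not met Jaro-Winkler, here is a compact Python sketch of the metric along with an 'exact results first, then close results' search. The original ran as a MySQL stored procedure called from PHP; this is a standalone illustration, and the search function, its 0.85 threshold and the substring test for 'exact' are assumptions.

```python
def jaro(s1: str, s2: str) -> float:
    """Jaro similarity: 0.0 (nothing in common) to 1.0 (identical)."""
    if s1 == s2:
        return 1.0
    if not s1 or not s2:
        return 0.0
    window = max(max(len(s1), len(s2)) // 2 - 1, 0)
    matched1 = [False] * len(s1)
    matched2 = [False] * len(s2)
    matches = 0
    for i, ch in enumerate(s1):
        # Only look for the character within the sliding match window
        for j in range(max(0, i - window), min(len(s2), i + window + 1)):
            if not matched2[j] and s2[j] == ch:
                matched1[i] = matched2[j] = True
                matches += 1
                break
    if matches == 0:
        return 0.0
    # Count matched characters that appear in a different order
    out_of_order = 0
    k = 0
    for i in range(len(s1)):
        if matched1[i]:
            while not matched2[k]:
                k += 1
            if s1[i] != s2[k]:
                out_of_order += 1
            k += 1
    transpositions = out_of_order // 2
    return (matches / len(s1) + matches / len(s2)
            + (matches - transpositions) / matches) / 3


def jaro_winkler(s1: str, s2: str, prefix_scale: float = 0.1) -> float:
    """Jaro score boosted for a shared prefix of up to 4 characters."""
    score = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1[:4], s2[:4]):
        if a != b:
            break
        prefix += 1
    return score + prefix * prefix_scale * (1 - score)


def search(query, titles, threshold=0.85):
    """Exact (substring) hits first, then fuzzy hits ordered by score."""
    q = query.lower()
    exact = [t for t in titles if q in t.lower()]
    scored = [(jaro_winkler(q, t.lower()), t) for t in titles if t not in exact]
    close = [t for score, t in sorted(scored, reverse=True) if score >= threshold]
    return exact + close
```

A misspelt query such as "hawkwnd" has no exact hit, so the result falls straight through to the fuzzy matches, where "Hawkwind" scores 0.975.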

I'm pleased to say that this cleared up all the issues the company had with misspelled user input. I have not looked back at this code in a long time, but I did store it on GitHub here: https://github.com/matt-platts/jaro-winkler-search-functions. I have retained a working copy of this on a private URL as it's quite an interesting piece of code.

Writing a templating language as part of a framework

This was a fun one. While building a CMS framework, I needed to be able to create templates and drop variables into them. I decided to roll my own here, which resulted in a basic templating language and a parser for it.

The templates themselves were standard HTML, and everything in the CMS was templated. For example, displaying a page with lists of records - which could be anything from products in a shop to, say, PDFs of meeting minutes - required two templates. Firstly I needed a template to hold all the records as a recordset display, together with things like next/previous page buttons and sort and filter functions - such as A-Z up or down, or a search function to only show records from a particular subset. In the case of products this could filter on the product name or product category - pretty much anything. Secondly I needed a template for each individual record.

I came up with a form of notation first of all, and for this I chose the format

{=variable_name}

Curly brackets were the characters least likely to feature within normal text, yet retained a similarity to other tags used in code such as < and > in HTML.

Swapping out small snippets of code like this is easy. A regular expression such as /{=[a-zA-Z0-9_]*}/ is the simple solution - matching the variable placeholder start and end ({= and }) and any number of alphanumeric characters plus an underscore in the variable name.
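As a minimal sketch of that substitution step, here it is in Python rather than the Perl or PHP of the framework. The render function, the choice to replace unknown variables with an empty string, and the sample data are assumptions for illustration.

```python
import re

# {= then a name made of letters, digits and underscores, then }
PLACEHOLDER = re.compile(r"\{=([a-zA-Z0-9_]+)\}")


def render(template: str, values: dict) -> str:
    """Replace each {=name} with values[name]; unknown names become ''."""
    def substitute(match):
        name = match.group(1)  # the text captured between {= and }
        return str(values.get(name, ""))
    return PLACEHOLDER.sub(substitute, template)


record = {"artist": "Some Artist", "title": "Some Title", "format": "CD"}
print(render("{=artist} - {=title}. {=format}", record))
# Some Artist - Some Title. CD
```

Passing a function to sub means each placeholder is looked up once as it is found, rather than looping over every possible variable name.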

What would the variable names be however? Well, a certain number were hard coded. Something such as {=sort_a_z} would replace to a selector for which way you'd like the records ordered. In the case of listing records, I used the database field names, so in the case of listing music CDs the template would look something like this:

{=artist} - {=title}. {=format}. {=price} {=image}

{=description}

HTML could be coded around the variables, leaving me with a template I could use for each record returned from a database.

As it goes, these templates themselves were stored in a database, in a table simply called templates. Aside from the template name and the HTML template itself, there were other fields such as 'type'. The type of the one above was a 'record template'. This would be used inside a 'recordset template'. All of this would be in a 'content_page' template which sat in a 'site_template' which contained all the sub templates, header and footer templates, menus etc. But I digress.

The Perl or PHP code would deal with loading the main template for the recordset containing other variables such as {=records_per_page_selector}, {=search_input}, {=search_button}, {=filter_by_selector}, and {=record_template:10}.

As you can see above, the last example is different. Because any recordset template needed to know which record template to use, the regular expression had to change to include the colon and the number - which, if you haven't guessed, was the ID (from the database auto-increment column) of the template into which the individual record data would be put.

The regular expression therefore needs to change to something like this:

/{=[a-zA-Z0-9_]*(?::[0-9]+)?}/ and in the code this can easily be found and replaced. A template for each individual record would be expected, and can simply be coded when going through the list of variables found, as per this example in Perl: if ($variable =~ /{=record_template:(\d+)}/) - note the parentheses - these capture the value matched by \d+, which can be backreferenced - getting this value allows the code to know which template to get from the database.
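To show how the captured ID might drive the lookup, here is a small Python sketch. It is an illustration under assumptions, not the CMS code: load_template stands in for whatever fetches a template row by ID, and the in-memory cache is a simple stand-in for the caching discussed in the side note.

```python
import re

# {=name} or {=name:123}; the numeric ID is captured when present
TAG = re.compile(r"\{=([a-zA-Z0-9_]+)(?::([0-9]+))?\}")


def render_record(template: str, record: dict) -> str:
    """Fill one record template with one row of data."""
    return TAG.sub(lambda m: str(record.get(m.group(1), "")), template)


def render_recordset(recordset_template, records, load_template):
    """Expand every {=record_template:ID} tag once per record.

    load_template(template_id) is a hypothetical callable returning the
    record template text; each ID is fetched at most once per call.
    """
    cache = {}

    def substitute(match):
        name, template_id = match.group(1), match.group(2)
        if name != "record_template" or template_id is None:
            return match.group(0)  # leave other tags for a later pass
        tid = int(template_id)
        if tid not in cache:
            cache[tid] = load_template(tid)
        return "".join(render_record(cache[tid], rec) for rec in records)

    return TAG.sub(substitute, recordset_template)
```

Tags such as {=search_input} are deliberately left untouched here so that a separate pass can fill them in.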

(As a side note, you may notice one thing - this potentially means a lot of grabbing of template records from the database. Due to the nature of this, it started to become a lot of database queries for displaying even a single page. Caching is a whole other ball game, and is far simplified by allowing these templates to be dumped out from the database into a text file, for example, which can be checked first before attempting to make a database query.)

Of course, the devil is in the detail. Before long, as part of my attempt to template *absolutely everything* in my personal mission to create a 'do anything' framework, more and more edge cases crept in, and the regular expression got bigger and bigger. Before long I was embedding variables within other variables, and I started having to use start and end tags such as {=start_tag} ... {=end_tag} and replacing the content in between. And of course, what if there was no data in a particular tag, how would that be treated? Suddenly, I needed to use if and else, but I didn't always need if and else so they had to be optional in some places, and then of course boolean checking wasn't enough and I needed to match values, or perhaps match this and that but not something else so negatives had to be taken into account, and before I knew it I was writing a whole language.

Here's a regex that deals with the if.. end if syntax taken right out of the code:

/{=if ((?:!?\w+,?)+ ?=? ?\w+)}(.*?){=end[ _]?if}/ims - this can be used to parse content such as this:

{=if status = active}

HTML goes here

{=end if}

{=if !draft,published = true}

HTML goes here

{=endif}

{=if field1,field2,field3=somevalue}

HTML for multiple conditions

{=end_if}

From here it was a case of seeing if these variables existed in the data I'd pulled from the recordset and dealing with them accordingly. For clarity, in the example above, {=if field1,field2,field3=somevalue} would treat the first two as booleans, while the third would pass only if it specifically matched somevalue. Each case could be written into the template itself with the corresponding HTML as part of that template. As a result, the templates stopped looking much like templates, which I had originally designed to be edited in the TinyMCE editor, and looked more and more like code as logic began to creep into them. The codebase itself became extremely abstract, dealing not with any specific content but with all these possible conditions and a lot of regular expressions.
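The evaluation rules can be sketched in a few lines of Python. This is an illustration of the behaviour described, not the original parser: it uses a simplified pattern that captures the whole condition and splits it in code, it does not handle nested blocks, and the function names are assumptions.

```python
import re

# Simplified pattern: capture everything up to } as the condition
IF_BLOCK = re.compile(
    r"\{=if ([^}]+)\}(.*?)\{=end[ _]?if\}", re.IGNORECASE | re.DOTALL
)


def condition_holds(condition: str, record: dict) -> bool:
    """Evaluate 'a,!b,c = value': a and b are booleans, c must equal value."""
    fields_part, _, expected = condition.partition("=")
    fields = [f.strip() for f in fields_part.split(",") if f.strip()]
    expected = expected.strip()
    if not fields:
        return False
    if expected:
        *flags, match_field = fields  # the last field is compared to the value
        if str(record.get(match_field, "")) != expected:
            return False
    else:
        flags = fields                # no value given: every field is a boolean
    for flag in flags:
        negated = flag.startswith("!")
        truthy = bool(record.get(flag.lstrip("!")))
        if truthy == negated:         # a plain flag must be set, a !flag must not
            return False
    return True


def render_conditionals(template: str, record: dict) -> str:
    """Keep the body of each if block whose condition holds; drop the rest."""
    return IF_BLOCK.sub(
        lambda m: m.group(2) if condition_holds(m.group(1), record) else "",
        template,
    )
```

Given a record where status is "active", draft is empty and published is "true", the first two example blocks above keep their HTML, while a block such as {=if field1,field2,field3=somevalue} is dropped as soon as field2 is empty.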

Before long, templates contained sub_templates, functions were written if values needed further processing (eg. {=passValueToFunction: varname}), global variables were introduced such as {=global:users_name}, and I haven't even started on the security and permissions, as making recordsets from a number of database tables available as templated recordsets on web front ends is a whole other ball game. But yes, full view and edit permissions on tables had to be taken into account too.

And that is how a simple idea of templating everything became a project that took over my life for a number of years. It did however make the business of creating some fairly complicated web sites very easy once the underlying code worked, and the job as a whole was a lot more interesting as I was always able to take what I'd done before, do the bulk of the work in that, and then add to the overall codebase for any new functionality which was required. A scenario which went on for many years.

One file which contains some of the regex logic from this CMS can be found on GitHub here.

As for the framework now? It's still running a few web sites and shopping carts on the web. I haven't looked at the code in a number of years, last I remember it once again needed some serious refactoring having outgrown the original intent.

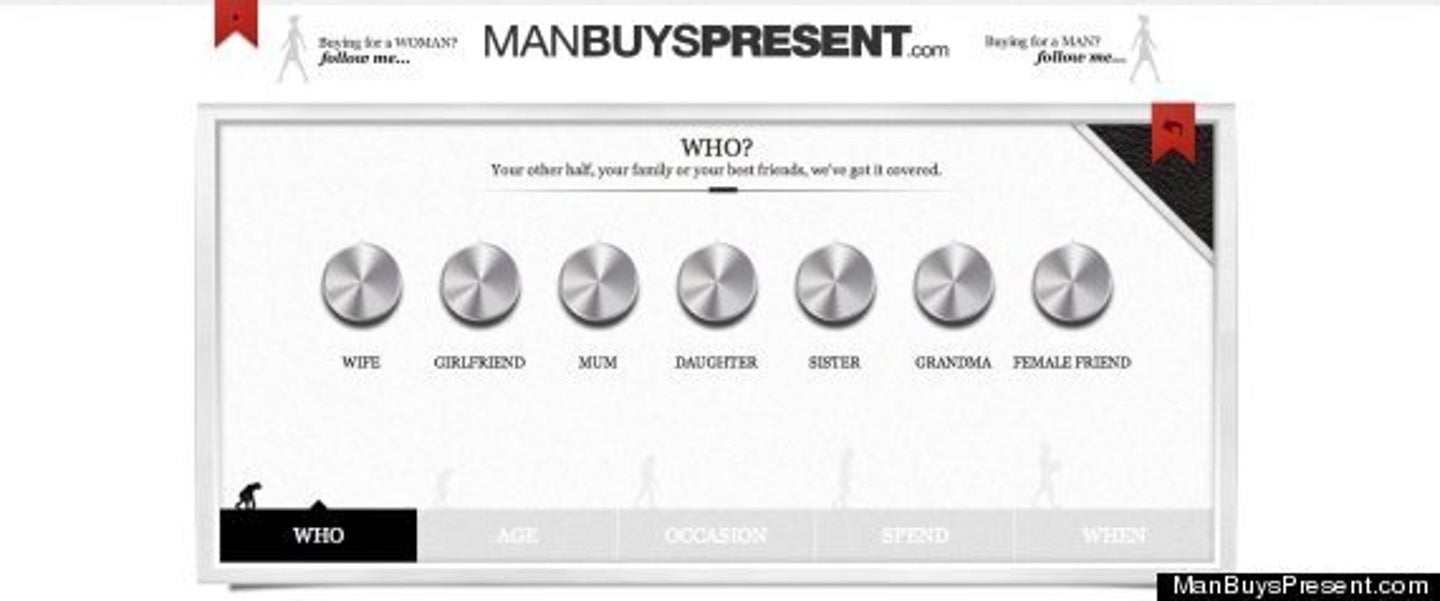

Manbuyspresent.com

Racing against a ticking clock...

Manbuyspresent.com was a very interesting e-commerce site which worked very differently to standard e-commerce. Instead of providing huge ranges of products for customers to browse and pick what they like, this web site had a very specialised interface designed to show as few products as possible, and let the user make yes/no choices until they found their perfect gift. The interface was a very visual JavaScript interface similar to a fruit machine with three 'wheels'. Customers could hold one wheel if they found a product on it that they thought they liked, and spin the others. No more than three products were visible on screen at a time.

To narrow down the selection of products shown, users first answered a few simple questions - who are you buying a gift for (relationship and age), what is the occasion, when do you need it by, how much do you want to spend. This allowed them to narrow the product selection down straight away. The rest of the algorithm was derived from making sure that products were shown equally - it could not be too random. Some initial feedback from suppliers was of the type of 'why isn't my product coming up for these selections' etc.

There was one other problem. The advertising was booked, the press reviews were all set up, the launch date was set... and the web site didn't work.

Some of it did... if you didn't mind getting the same products for whatever choices you selected. I was interviewed by a very frustrated company who had given up on their web design house. There were just weeks to go, and a huge list of features requiring implementation. A connection to Facebook was supposed to allow users to load in their friends lists, for example, and suggest gifts for birthdays etc. It also functioned as a date reminder app. Could I get through a huge list of bug fixes in a code base I'd never seen before? Would I come into the office every day and work to get it all working by the deadline? Of course I would!

The algorithm for choosing which products to display appeared to be where the previous developers became stuck. As I worked on it, it became incredibly complex very quickly due to the number of metrics involved, and the math to generate the correct products and keep everybody happy was no mean feat. Of all the code I wish I'd kept a sneaky backup of, this would be the one. One of the difficulties was keeping the suppliers happy when the company thought a product was sub-standard. It needed to show, but not be prioritised. Then there were products the company wanted to push which they thought made their web site look good. So we introduced product 'weightings' along with ensuring that product from all suppliers was rotated evenly - every view had to be stored. We also wanted to show products over many categories to avoid things looking too 'samey', and keep track of what users were clicking 'no' to, so as to avoid showing too many similar products.

What I remember from 13 years ago is that the resulting SQL I wrote was enormous. It was definitely sub-queries stacked up. There was definitely pre-processing going on in an overnight cron job to generate some weightings. If the delivery dates were very close we needed to ensure that suppliers could fulfil the order - this discounted many, for example. Sometimes suppliers would go on holiday, and these dates needed to be taken into account as well so we weren't showing anything which couldn't be supplied. Quite what the algorithm was I don't completely recall after 13 years, other than that it took days of discussions and work, back and forth with management, and lots of testing and checking to get the web site to display an accurate and well rounded range of product that the company and suppliers were happy with. There were a lot of metrics which needed to be taken into account for the simple action of product selection, and even the metrics themselves had weightings on them in order to force an even distribution.
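Since the original algorithm is not recoverable, the following Python sketch is purely illustrative of the kind of trade-off described: filter out what cannot be supplied, then rank by an editorial weighting that is diluted by how often a product has already been shown, while keeping the three visible products in different categories. Every name, field and constant here (Product, pick_products, the 0.25 penalty) is an assumption, not a reconstruction.

```python
from dataclasses import dataclass


@dataclass
class Product:
    name: str
    supplier: str
    category: str
    price: float
    lead_days: int       # days the supplier needs to deliver
    weight: float = 1.0  # editorial weighting: push up or play down
    views: int = 0       # how many times it has been shown so far


def pick_products(products, budget, days_until_needed,
                  rejected_categories=(), slots=3):
    """Choose up to `slots` products, one per category, favouring
    high-weight products that have not been shown much yet."""
    eligible = [p for p in products
                if p.price <= budget and p.lead_days <= days_until_needed]

    def score(p):
        s = p.weight / (1 + p.views)  # each view dilutes the weighting
        if p.category in rejected_categories:
            s *= 0.25                 # still possible, but played down
        return s

    chosen, seen_categories = [], set()
    for p in sorted(eligible, key=score, reverse=True):
        if p.category in seen_categories:
            continue                  # avoid a 'samey' selection
        chosen.append(p)
        seen_categories.add(p.category)
        if len(chosen) == slots:
            break
    for p in chosen:
        p.views += 1                  # every view is recorded
    return chosen
```

Because views feed back into the score, a product that loses out to a heavier-weighted rival in the same category will overtake it on a later spin, which gives a simple form of rotation without relying on randomness.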

The launch date was met of course - I had to put the hours in but I made sure of it. It was successful for a short while, although it is now sadly defunct. I went on to build other web sites for the same management, who also ran a restaurant chain which was their main business, before I was offered a full time position with GoHenry.

Writing a pacman game

Just for fun

I didn't write a pacman clone after I'd learned JavaScript - I learned JavaScript to write a pacman clone! It seemed like a sensible idea in 1999, and actually I still stand by that - I learned the language inside out. Little did I know I'd still be messing with it years later. There is always something to add, always a new idea to implement, and as code moves on, always a rewrite around the corner. I think I finally put this to bed in 2016, but maybe not. I wrote a page of notes on this one many years ago - you can find this page here.